The human immune system is a powerful and complex defense network designed to protect the body from infections, viruses, bacteria, and harmful substances.

Hematology Diagnosis and Evaluation: A Complete Guide to Blood Health

Blood plays a vital role in keeping the human body healthy. It carries oxygen, nutrients, hormones, and immune cells throughout the body while removing waste products.

Hematology Symptoms and Risk Factors: A Complete Informative Guide

Hematology is a specialized branch of medicine that focuses on blood, blood-forming organs, and blood-related diseases.

Hematology Recovery and Follow-up: A Complete Guide to Post-Treatment Care

Hematological conditions, whether benign or cancer-related, often require specialized medical care, advanced treatments, and long-term monitoring. However, treatment is only one part of the journey.

Hematology Treatment and Management: A Complete Guide to Blood Health

Hematology is a specialized branch of medicine focused on the study, diagnosis, treatment, and prevention of blood disorders. Blood plays a vital role in transporting oxygen, fighting infections, and maintaining overall body balance.

Why Some Knee Injuries Heal Slowly and How to Speed Recovery

It often starts with a small incident: a twist during exercise, an awkward movement, or a slip on a wet surface. The initial pain may ease, but the discomfort often lasts longer than expected.

How to Help a Loved One Manage Swallowing Difficulties

Swallowing difficulties, formally called dysphagia, can significantly affect a person's ability to eat and drink normally.

How Remote Patient Monitoring Solutions Are Transforming Patient Care

Healthcare has always been a relationship game built on timing, trust, and good information, yet for decades providers have had to deliver care based on snapshots of a patient's daily life.

Five Scenarios Where Physician Advisor Escalation Prevents Rework

Admission status questions, medical necessity reviews, and payer requests place steady demands on hospital teams.

3 Important Healthcare Considerations That Often Go Overlooked in the US

In the United States, conversations about healthcare often center on insurance costs, access to providers, and managing chronic conditions.

5 Hormone-Related Symptoms Women in Warner Robins, GA Shouldn’t Ignore

Many women in Warner Robins, GA notice changes that don’t quite add up—feeling drained even after a good night’s sleep, mood shifts that seem out of character, or weight changes that won’t budge despite healthy routines.

How Mental Health Support Can Boost Everyday Life

When you or someone close to you is struggling with emotional wellbeing, seeking professional help can make a real difference.

Leveraging MyCPR NOW for All Your Training Needs

In today’s world, knowing how to respond to emergencies is essential, whether at home, in the workplace, or in public settings. CPR and first aid skills can save lives, and staying up-to-date with the latest techniques is vital for personal safety and job requirements.

Improving Eye Care Through Accurate Testing Technology

When eye care professionals need reliable tools to assess patients’ visual abilities, having access to modern equipment is essential.

Helping Seniors Live Comfortably With Fecal Incontinence

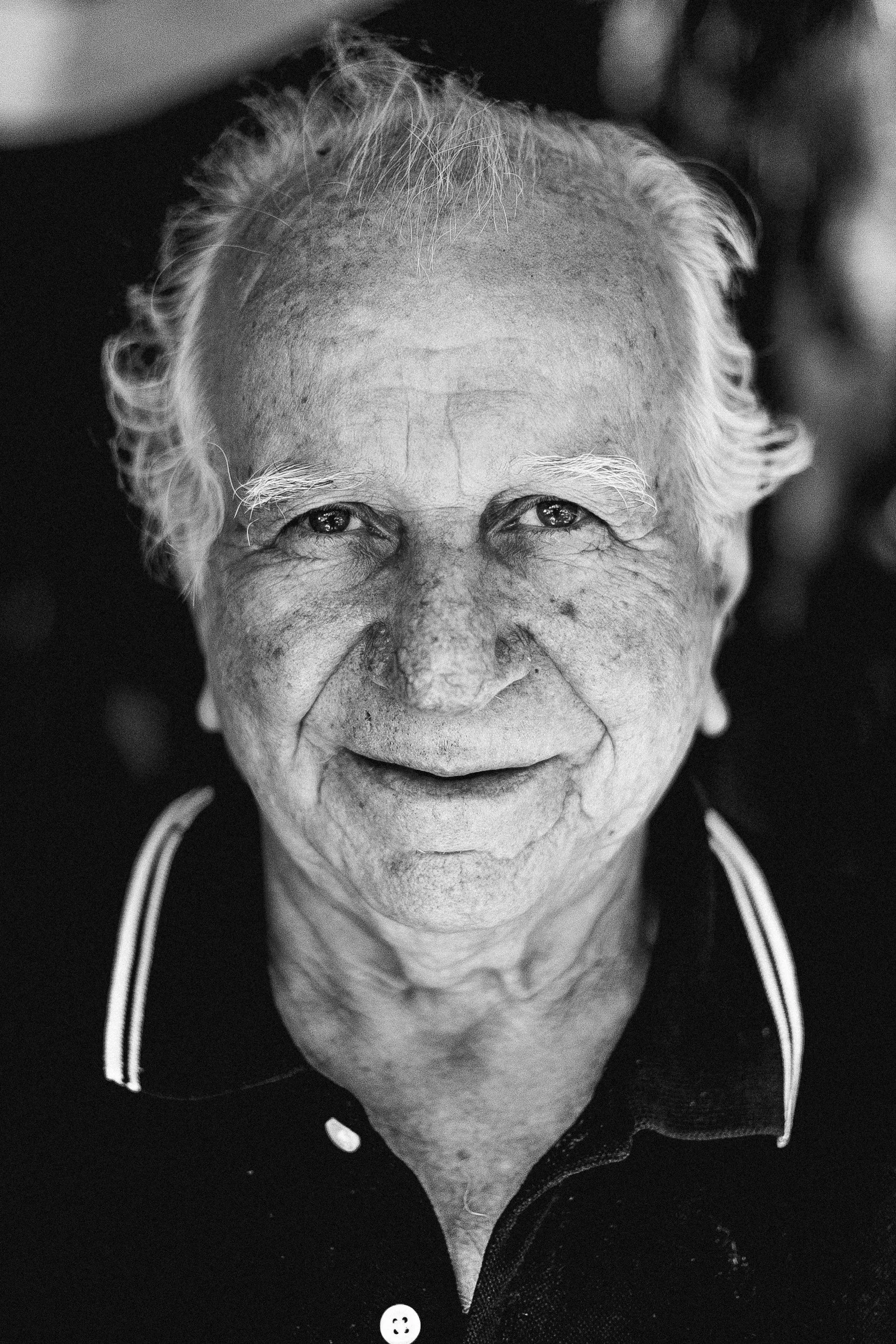

Many seniors live vibrantly until an unexpected health hurdle, fecal incontinence, shakes their confidence. The condition ranges from minor leakage to total loss of bowel control, and silence around it deepens isolation.